Interactive Live Streaming with Ultra-Low Latency

Oliver Lietz, CEO nanocosmos

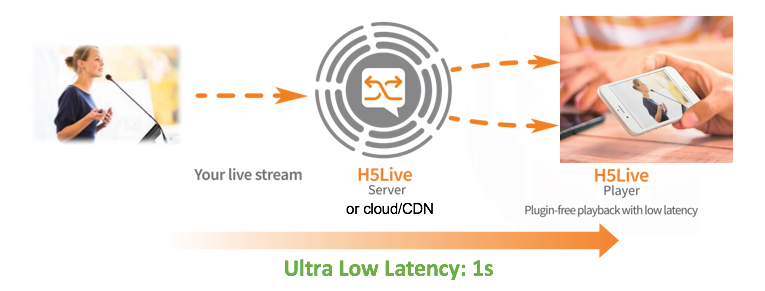

Interactive live streaming applications allow viewers to experience events around the world in real time. In this article, we describe how these applications are created, how they achieve ultra-low latency, and how our nanoStream Cloud, including the unique H5Live playback technology, helps users achieve their low-latency goals.

How can I create true interactive live streaming applications with ultra-low latency?

Interactive use cases are becoming more prevalent. Video is moving away from one-way broadcast toward two-way or multi-way communication. Webcasters and broadcasters want to communicate directly with their audiences in real time, or engage them by getting live feedback during presentations or events.

Live streaming of user-generated content on mobile devices, or live communication tools, requires a bidirectional connection with ultra-low latency (below one or two seconds) to achieve real-time user experiences in applications such as live auctions, gambling, webcasts, or sports.

Our nanoStream H5Live playback technology lets you build interactive applications with ultra-low latency, plugin-free on any browser and on any device.

Encoding, streaming and playback

What is latency?

Latency is the time between the capture of a video frame by a camera and the playback of that same frame on another device ("glass-to-glass"). In internet streaming applications, every component in the workflow can add latency: the camera/encoder, the upstream network, the streaming server, the downstream network, and the video player on the viewer side.
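Because every stage adds its own delay, glass-to-glass latency is simply the sum of the per-stage delays. The minimal Python sketch below illustrates this. The stage names follow the list above, but the delay values are hypothetical placeholders, not measurements.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    delay_s: float  # delay this stage adds, in seconds


def glass_to_glass(stages: list[Stage]) -> float:
    """Total latency from camera capture to display: the sum of all stage delays."""
    return sum(stage.delay_s for stage in stages)


# Hypothetical per-stage delays, for illustration only (not measured values).
workflow = [
    Stage("camera/encoder", 0.2),
    Stage("upstream network", 0.1),
    Stage("streaming server", 0.1),
    Stage("downstream network", 0.1),
    Stage("player buffer", 0.5),
]

print(f"glass-to-glass latency: {glass_to_glass(workflow):.1f} s")
print("largest contributor:", max(workflow, key=lambda s: s.delay_s).name)
```

Reducing total latency therefore means attacking the largest contributors first, which in practice is often the buffering on the player side.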

You may already have noticed this effect: if you watch a live event such as a football match over IPTV or an internet live stream while listening to the same match on the radio, the video lags several seconds behind the radio.

What latency values can I expect?

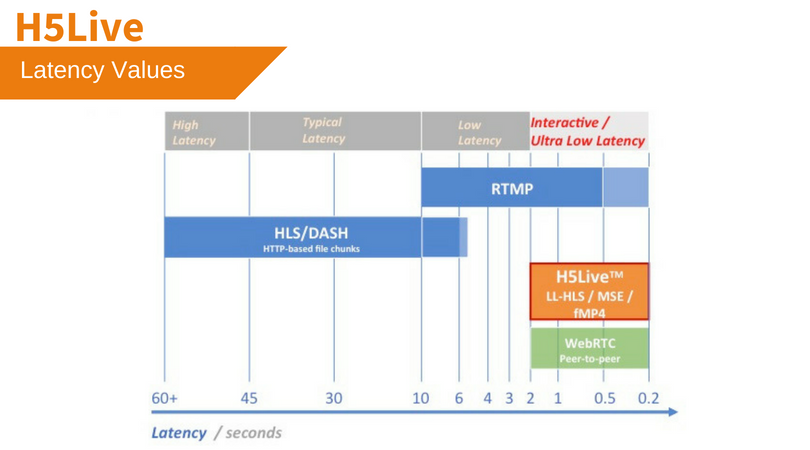

Standard video distribution protocols for live playback in web browsers, such as HLS and DASH, are based on HTTP. With these protocols, latency can get very high, up to 30-60 seconds. When people talk about low latency, they usually mean bringing latency down to 5-10 seconds.

However, this is still far too high for interactive live video applications, which need ultra-low latency below 2 seconds.

Latency Values

What is the reason for long latency times?

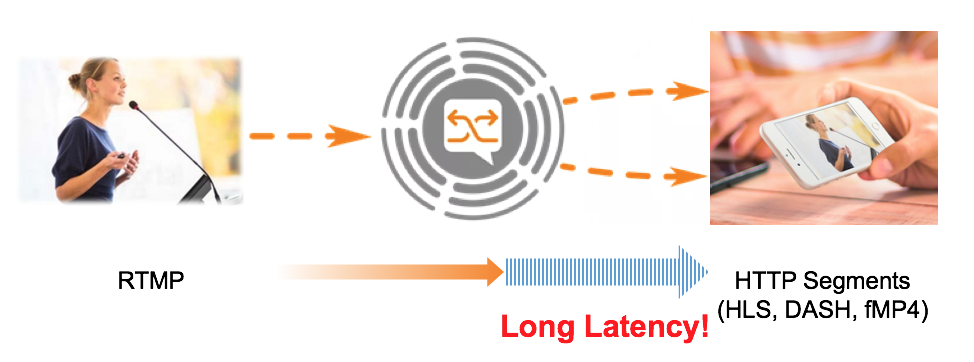

Every network transport creates latency. Sending packets around the world can take up to 1 second. But why is video latency still much higher? Latency depends not only on the network infrastructure, but also on the video compression, packaging and delivery method used. Current playback technologies use HTTP segments that the player pulls from the streaming server. HLS segments are several seconds long, because they are cut at "key frames".

Compression methods such as H.264 group pictures into chunks (a "group of pictures", or GOP), each starting with a key frame, with key frames every 2-10 seconds or more. With HLS, the player usually loads 3 segments before it starts playback, which results in at least 6-30 seconds of end-to-end latency. In the end, the player sees a playlist and file chunks, which it loads over HTTP. Other technologies such as MPEG-DASH work on basically the same concept and have the same issues.
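The arithmetic behind these numbers fits in a few lines. This sketch assumes that the shortest possible segment is one keyframe interval (GOP) long and that the player holds 3 segments, as described above. The function name is hypothetical, and real encoder, network and server delays come on top of this lower bound.

```python
def min_segment_latency(keyframe_interval_s: float, buffered_segments: int = 3) -> float:
    """Lower bound on latency for segment-based HTTP delivery (HLS, DASH).

    Assumes the shortest possible segment is one keyframe interval (GOP) long
    and that the player holds `buffered_segments` segments before playing.
    Encoder, network and server delays come on top of this bound.
    """
    if keyframe_interval_s <= 0 or buffered_segments < 1:
        raise ValueError("need a positive keyframe interval and at least one segment")
    return buffered_segments * keyframe_interval_s


for gop_s in (2, 6, 10):
    print(f"keyframes every {gop_s:>2} s -> at least {min_segment_latency(gop_s):.0f} s latency")
```

With key frames every 2 seconds this gives 6 seconds, and with key frames every 10 seconds it gives 30 seconds. Shortening the GOP alone cannot remove the multiplier introduced by buffering whole segments.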

Latency increases further with network dropouts and instability. Interactive applications cannot work under these conditions.

Live Encoding with RTMP; Segmented delivery / playback with HTTP/HLS/DASH

How can ultra-low latency be achieved for interactive applications?

A more "intelligent" delivery format is required to keep latency low, independently of constraints such as GOP length. Since no viable, widely available technology existed, we decided to develop our own technology in-house, called H5Live. H5Live is compatible with all HTML5 browsers and runs plugin-free on any device.

Interactive applications, such as live-streamed auctions or bets, depend on ultra-low latency below 1 or 2 seconds for a good buyer experience. In Flash-based environments on desktop browsers, the RTMP protocol was able to achieve latencies of around two seconds in good network conditions. RTMP is still a viable protocol inside native apps when used with latency-tuned software such as nanoStream. In browsers, however, Flash support is being disabled, so only HTTP-based protocols remain available. These protocols, such as HLS and DASH, cannot deliver real-time performance for live streams.

What is H5Live and how can we guarantee ultra-low latency live streaming and playback?

To respond to our customers’ needs for ultra-low latency use cases, the nanocosmos team invented nanoStream H5Live, the plugin-free HTML5 based stream delivery and playback technology.

nanoStream H5Live is a client-server delivery and playback solution based on HTML5 technologies, enabling ultra-low latency, plugin-free, browser-based playout on all HTML5 web browsers.

H5Live is integrated with nanoStream Cloud, which includes all the streaming services and APIs you need to create your own live streaming platform with ultra-low latency.

How do I use H5Live on my own web page?

The H5Live player is a lightweight JavaScript library that can easily be embedded in your own web page with just a few lines of code. See our H5Live demo page and documentation for further information.

How does H5Live work?

H5Live is a unique live stream segmentation, delivery and playback technology developed by nanocosmos. It is designed to keep the stream formats compatible with existing HTML5 browsers, enabling plugin-free playback on any platform.

H5Live works on all HTML5 browsers. If Media Source Extensions (MSE) are available, H5Live uses a low-latency delivery approach based on fragmented MP4. On iOS, H5Live uses a modified version of HLS with a unique low-latency mode, allowing playback with standard HLS/Safari setups ("ULL-HLS").
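The selection described above can be sketched as a simple capability check. This is a hypothetical illustration of the decision order only, not H5Live's actual code, and the names `Delivery` and `choose_delivery` are made up for the example. In a browser, the two inputs would come from JavaScript feature checks such as `window.MediaSource` and `video.canPlayType("application/vnd.apple.mpegurl")`.

```python
from enum import Enum


class Delivery(Enum):
    FMP4_MSE = "fragmented MP4 via Media Source Extensions"
    ULL_HLS = "low-latency HLS via the native player (e.g. Safari on iOS)"
    UNSUPPORTED = "no supported playback path"


def choose_delivery(has_mse: bool, has_native_hls: bool) -> Delivery:
    """Hypothetical playback-path selection following the order described above."""
    if has_mse:
        return Delivery.FMP4_MSE      # preferred: fMP4 fed into Media Source Extensions
    if has_native_hls:
        return Delivery.ULL_HLS       # fallback for browsers without MSE
    return Delivery.UNSUPPORTED


print(choose_delivery(has_mse=True, has_native_hls=False).value)
print(choose_delivery(has_mse=False, has_native_hls=True).value)
```

For example, a desktop browser with MSE gets the fMP4 path, while a browser without MSE but with a native HLS player falls back to the low-latency HLS variant.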

H5Live consists of several modules:

- Live stream reader: connects to existing H264/AAC/RTMP live streams. nanoStream Cloud also supports WebRTC live sources based on UDP.

- Live stream segmenter / multiplexer: creates a unique live stream that any HTML5 browser can connect to. Unlike existing HLS or DASH segmenters, H5Live uses frame-based delivery, independent of the H264 GOP length or file chunks.

- Live stream delivery based on fragmented MP4 (fMP4), in different sub-formats: low-latency HLS, which works with ultra-low latency on all HLS devices (including Safari on iOS), or MSE over WebSocket. Support for "dumb" devices such as set-top boxes is available on request.

- Buffer control: keeps latency low in all network situations, even on unstable mobile networks.
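Buffer control is a feedback loop: the player measures how much media is buffered and nudges playback so the buffer stays close to a small target. The sketch below shows one generic way to do this by adjusting the playback rate, with a jump to the live edge when the player falls too far behind. All thresholds and the function name are assumptions for illustration; this is not a description of H5Live's internal algorithm.

```python
# Hypothetical tuning constants for illustration; a real player tunes these per use case.
TARGET_BUFFER_S = 0.5   # desired amount of buffered media
TOLERANCE_S = 0.1       # dead band: play at normal speed inside it
GAIN = 0.1              # playback-rate change per second of buffer error
MIN_RATE, MAX_RATE = 0.95, 1.10
JUMP_THRESHOLD_S = 2.0  # this far behind: skip to the live edge instead


def buffer_control(buffered_s: float) -> tuple[float, bool]:
    """Return (playback_rate, jump_to_live_edge) for the current buffer level."""
    error_s = buffered_s - TARGET_BUFFER_S
    if error_s > JUMP_THRESHOLD_S:
        return 1.0, True                      # too much delay: seek forward
    if abs(error_s) <= TOLERANCE_S:
        return 1.0, False                     # close enough to the target
    rate = 1.0 + GAIN * error_s               # >1 drains the buffer, <1 refills it
    return min(MAX_RATE, max(MIN_RATE, rate)), False


for level_s in (0.0, 0.5, 1.0, 3.0):
    print(level_s, buffer_control(level_s))
```

In a browser player, the returned rate would map onto the `playbackRate` property of an HTML media element, and a jump would be a seek of `currentTime` towards the live edge. Clamping the rate keeps the speed change small enough that viewers barely notice it.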

How is H5Live different from HLS and DASH?

HLS and DASH both suffer from the restrictions of file-based segments pulled over HTTP requests. Due to the nature of HTTP and internet connections, the segment duration cannot easily be reduced below 2 seconds without sacrificing performance and stability. As HLS and DASH players typically use at least 3 chunks, this leads to at least 6 seconds of latency in standard HLS environments.

H5Live is fully compatible with HLS. However, it avoids the restrictions of file-based HLS and DASH segments by using frame-based segments in a continuous fragmented MP4 live stream.
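In fragmented MP4, media travels as a sequence of boxes, and each fragment consists of a `moof` (metadata) box followed by an `mdat` (media data) box. Frame-based delivery means a fragment can carry as little as a single frame and be sent as soon as that frame is encoded, instead of waiting for a GOP-length file. The Python sketch below shows only this box framing, assuming one frame per fragment. It omits the initialization segment (`ftyp`/`moov`) and the `traf` box that a playable fragment needs, and it does not describe how H5Live actually packages its streams.

```python
import struct


def box(box_type: bytes, payload: bytes) -> bytes:
    """Serialize one ISO BMFF box: 32-bit big-endian size, 4-byte type, payload."""
    if len(box_type) != 4:
        raise ValueError("box type must be exactly 4 bytes")
    return struct.pack(">I", 8 + len(payload)) + box_type + payload


def frame_fragment(sequence_number: int, frame: bytes) -> bytes:
    """A fragment carrying one encoded frame: 'moof' followed by 'mdat'.

    Simplified: a playable 'moof' also needs a 'traf' box (tfhd, tfdt, trun)
    describing the sample; only the movie fragment header is written here.
    """
    mfhd = box(b"mfhd", struct.pack(">II", 0, sequence_number))  # version/flags = 0
    return box(b"moof", mfhd) + box(b"mdat", frame)


def top_level_boxes(data: bytes) -> list[tuple[str, int]]:
    """List (type, size) of each top-level box in a byte stream."""
    boxes, offset = [], 0
    while offset + 8 <= len(data):
        size, box_type = struct.unpack_from(">I4s", data, offset)
        if size < 8:  # 0 (to end of file) and 1 (64-bit size) are not handled here
            raise ValueError(f"unsupported box size {size} at offset {offset}")
        boxes.append((box_type.decode("ascii"), size))
        offset += size
    return boxes


# Three dummy 100-byte "frames", each sent as its own fragment.
stream = b"".join(frame_fragment(n, bytes(100)) for n in range(1, 4))
print(top_level_boxes(stream))
```

Because each fragment is self-delimiting, a receiver can append it to the playback buffer as soon as it arrives, rather than waiting for a segment file to complete.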

What about WebRTC – is it used in H5Live?

WebRTC, the real-time communication technology promoted by Google, was designed for peer-to-peer communication in small groups only. By design, WebRTC uses UDP and RTP by default, with TCP-based fallbacks. Its video codecs are VP8, VP9 and H264; audio is usually Opus, not AAC.
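The "small groups" limitation follows from simple arithmetic for a pure peer-to-peer mesh: every participant sends its stream separately to every other participant. This sketch models only such a mesh and assumes a hypothetical bitrate of 2 Mbit/s per stream.

```python
def mesh_connections(participants: int) -> int:
    """Peer-to-peer connections in a full mesh: n * (n - 1) / 2."""
    return participants * (participants - 1) // 2


def mesh_upload_mbps(participants: int, bitrate_mbps: float) -> float:
    """Upload each peer needs: its own stream sent separately to every other peer."""
    return (participants - 1) * bitrate_mbps


BITRATE_MBPS = 2.0  # hypothetical per-stream bitrate

for n in (4, 10, 1000):
    print(f"{n:>5} peers: {mesh_connections(n):>7} connections, "
          f"{mesh_upload_mbps(n, BITRATE_MBPS):>6.0f} Mbit/s upload per peer")
```

At 1,000 participants, each peer would need roughly 2 Gbit/s of upload bandwidth, which is why large audiences require a server-based distribution layer.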

WebRTC is able to achieve very low latency, but it does not usually integrate well with live streaming environments based on RTMP, HLS, H264 and AAC.

nanoStream WebRTC.live closes this gap and can be used for live encoding and broadcast. On the delivery side, however, WebRTC lacks CDN and vendor support (for example, it is not supported by Apple). H5Live scales much better and is compatible with all browsers on all devices.

What is UDP-TS and how can it reduce latency?

UDP is a very simple, low-level internet protocol. Unlike TCP, it uses no connection handshake and does not guarantee delivery, because the receiver does not acknowledge packets. This reduces complexity and makes it possible to build low-latency applications. However, reliable live streaming requires several application layers on top of UDP. One of these can be a "Transport Stream" (TS), a packet-based multiplex format defined in the MPEG-2 standards. Forward error correction (FEC) must also be added. UDP-TS is sometimes used in multicast applications and usually carries standard codecs such as H264 and AAC, but there is no generally available application standard for UDP (for example, there is no browser support), with the exception of WebRTC, which may use UDP implicitly under the hood.
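The two layers mentioned above can be illustrated together. An MPEG-2 Transport Stream splits data into fixed 188-byte packets with a 4-byte header (sync byte 0x47, PID, continuity counter), and a simple XOR parity packet lets the receiver rebuild one lost packet per group. This is a simplified sketch: it pads the last packet with 0xFF bytes instead of using adaptation-field stuffing, and the single-parity FEC only illustrates the principle behind standardized schemes such as SMPTE ST 2022-1. The function names are hypothetical.

```python
TS_PACKET_SIZE = 188
TS_HEADER_SIZE = 4
SYNC_BYTE = 0x47


def ts_packets(pid: int, payload: bytes) -> list[bytes]:
    """Split a payload into 188-byte MPEG-2 TS packets on one PID (simplified)."""
    chunk = TS_PACKET_SIZE - TS_HEADER_SIZE
    packets = []
    for counter, start in enumerate(range(0, len(payload), chunk)):
        pusi = 1 if start == 0 else 0        # payload_unit_start_indicator
        header = bytes([
            SYNC_BYTE,
            (pusi << 6) | ((pid >> 8) & 0x1F),  # no error/priority flags, PID high bits
            pid & 0xFF,                          # PID low bits
            0x10 | (counter & 0x0F),             # not scrambled, payload only, continuity counter
        ])
        # Simplification: pad with 0xFF; real TS would use adaptation-field stuffing.
        body = payload[start:start + chunk].ljust(chunk, b"\xff")
        packets.append(header + body)
    return packets


def xor_parity(packets: list[bytes]) -> bytes:
    """FEC parity packet: byte-wise XOR over all packets of a group."""
    parity = bytearray(TS_PACKET_SIZE)
    for packet in packets:
        for i, value in enumerate(packet):
            parity[i] ^= value
    return bytes(parity)


def recover_missing(received: list[bytes], parity: bytes) -> bytes:
    """Rebuild the one lost packet: XOR of the parity with all received packets."""
    return xor_parity(received + [parity])


packets = ts_packets(pid=0x100, payload=bytes(range(256)) * 3)  # 768 bytes
parity = xor_parity(packets)
received = packets[:2] + packets[3:]        # packet 2 lost in transit
restored = recover_missing(received, parity)
print(len(packets), "packets, recovered:", restored == packets[2])
```

The receiver recovers the loss without asking the sender to retransmit, which avoids the round trip that makes TCP retransmission costly for latency. The price is the extra bandwidth of the parity packets.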

What else is important for low-latency live streaming?

The transport protocol is only part of the story: coding, packaging and multiplexing form a complex communication system spanning both encoder and player, with buffering added at every step. Live streaming is now often used in unstable environments such as mobile networks, which require highly dynamic control loops such as buffer control, frame dropping or short-term adaptive bandwidth control. These techniques can have a greater impact on the user experience than the underlying transport protocol.
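Short-term adaptive bandwidth control can be sketched as a throughput estimator combined with a rendition choice. This minimal example assumes an exponentially weighted moving average of measured throughput and picks the highest available bitrate that fits under a safety margin. The class, the renditions and the parameter values are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class BitrateController:
    """Pick a rendition from a smoothed throughput estimate (hypothetical sketch)."""
    renditions_kbps: list[int]
    alpha: float = 0.3          # weight of the newest measurement
    safety: float = 0.8         # use only 80% of the estimate, keep headroom
    estimate_kbps: Optional[float] = None

    def update(self, measured_kbps: float) -> int:
        # Exponentially weighted moving average of measured throughput.
        if self.estimate_kbps is None:
            self.estimate_kbps = measured_kbps
        else:
            self.estimate_kbps = (self.alpha * measured_kbps
                                  + (1 - self.alpha) * self.estimate_kbps)
        budget = self.safety * self.estimate_kbps
        fitting = [r for r in self.renditions_kbps if r <= budget]
        # Highest rendition within budget; fall back to the lowest one otherwise.
        return max(fitting) if fitting else min(self.renditions_kbps)


controller = BitrateController(renditions_kbps=[400, 800, 1500, 3000])
for measured in (4000, 1000, 1000, 300):  # throughput drops, e.g. on a mobile network
    print(measured, "kbit/s measured ->", controller.update(measured), "kbit/s rendition")
```

Lower `alpha` values smooth out short spikes, while higher values react faster to sudden drops, which matters most on mobile networks.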

An end-to-end live streaming workflow: the H5Live Player and nanoStream WebRTC.live

Together, nanoStream WebRTC.live and H5Live form a powerful end-to-end live streaming workflow. Without any plugins, the workflow enables ultra-low latency live streaming from encoding through streaming to playback, directly within web browsers.

Why did we at nanocosmos decide to create H5Live?

Availability: H5Live is based on standard HTML5 technologies that are available in all browsers, and can be deployed on desktop and mobile platforms (iOS and Android).

Large-scale Distribution: WebRTC is not well suited for large-scale distribution and delivery worldwide. It does not integrate with common live streaming delivery practices (CDN). Nor is it available on all browsers and operating systems: it can primarily be used only in Chrome and Firefox.

Cross-platform Integration: The streaming protocol is similar to HLS and DASH, but with a modified workflow to enable true ultra-low latency live streaming applications for the web.

Cloud-based Application: Our nanoStream Cloud end-to-end streaming solution makes live streaming applications adaptable to different streaming environments.

Live Encoding with WebRTC and Live Playback with H5Live: For live encoding, we created a WebRTC-Live Streaming Bridge, which can stream to any RTMP destination, CDN or nanoStream Cloud. In combination with the H5Live playback solution, you can create a fully plugin-free, browser-based end-to-end live streaming application.

How can I add low-latency live streaming to native apps on any platform?

H5Live and WebRTC.live are plugin-free solutions for web browsers.

For native applications on iOS, Android, Windows and macOS, you can use our nanoStream SDKs to easily create your own branded live streaming app.

What else do I need to know?

The good news is, you do not need all this technical background to get started!

The nanoStream H5Live player hides this complexity from you and takes care of choosing the right delivery format for your audience's devices. Try it yourself with nanoStream Cloud!

About nanocosmos

nanocosmos has been providing customized video solutions since 1998. Its nanoStream products for live encoding, streaming and playback are now available cross-platform, end-to-end from the camera to the viewer, for your brand.

nanocosmos blog

Why is low latency so important for your live streaming projects?

based on Executive Vision in StreamingMedia Magazine